The MVP Was the Easy Part

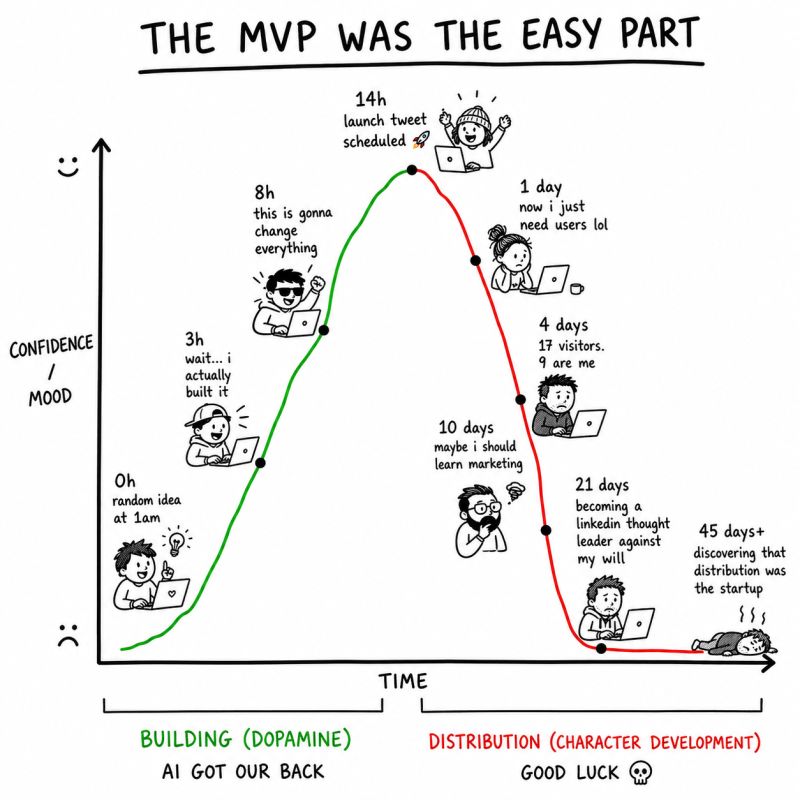

Steve Blank has been teaching Stanford’s Lean LaunchPad for sixteen years. This year, for the first time, teams walked in on day one with MVPs that looked finished: not rough prototypes, not wireframes, but finished-looking products. “I’ve been writing about how AI is going to change startups, but seeing 8 teams actually implementing it was mind blowing,” he admitted. What previously took weeks to build now appeared almost overnight. Blank’s conclusion? “After the class, as the instructors sat processing what just happened, we realized there’s no going back.” He’s right, but he may be understating what we are leaving behind. The friction AI has now removed wasn’t just annoying; it was doing a job.

When building was hard, weak assumptions had somewhere to get caught. A team had to spend real time, real money, and real energy turning an idea into something testable. That process created natural points of resistance, those moments when someone might pause and ask whether the problem was actually real, whether users genuinely cared, whether the customer was clear. The irony is that AI has cleaned up the mess, but in doing so it may have removed the mechanism that made the mess useful.

Jim Hornthal, of the Berkeley-based Haas School of Business, commenting on Blank’s post, put it simply: “Product development is no longer the primary bottleneck.” Teams can produce functional MVPs in days. But the hard constraint, he notes, hasn’t changed at all: it’s still customer discovery, validation, and the search for a repeatable business model. The “AI that still matters most,” as he drily puts it, is “Actual Interviews.” In his reply, Netherlands-based Joost Okkinga added a key observation that sharpens the whole problem: “AI makes teams feel further ahead than they are. The product artefact improves faster than the evidence base. So the discipline needs to move upstream: map assumptions first, then use AI to accelerate the right tests.”

A startup founder can now generate a landing page, a prototype, a pitch deck, a feature roadmap, a user onboarding flow, and a full set of customer personas before they have properly tested whether the key assumption is true. The result may look sophisticated; it impresses casual observers, and it may even impress the founder themselves. But underneath it the core thinking may still be fragile, and now, thanks to AI, that is harder to see because the surface is so tidy and clean-looking.

Credit: Dmitry Trofimets (https://www.linkedin.com/in/dtrofimets/)

When Gaps Become Outputs

There is a subtler version of the same problem that most teams discover the hard way. When AI becomes the most frequent reader of your instructions, documents, and context, the assumptions you never thought to write down stop being harmless gaps and start becoming inputs. A human colleague fills in what is missing from shared experience, from background knowledge, or through a quiet word across a desk. A model does not ask for a discussion. It works with what it is given, and where something is missing it may not pause; it proceeds. As a result the gap does not stay a gap; it is baked into the output. This is why working with AI does not just reward precision. It also exposes every step you assumed did not need saying.

Research-Looking Is Not Research

The same dynamic is playing out in scientific research, and in some ways it’s more alarming there. A recent paper on AI-generated research, “AI for Auto-Research: Roadmap & User Guide”, describes systems that can produce a complete paper, including idea generation, literature search, code, experiments, charts, manuscript, simulated peer review, and rebuttal, all for as little as $15. One system ran for 228 hours, used 11.4 billion tokens, and produced 100 papers. Another reportedly ran more than 20 GPU experiments overnight and improved a draft score from 5.0 to 7.5 through automated review and revision loops.

The striking thing isn’t the $15 figure. It is the paper’s deeper warning: a research paper can now carry a clean title, a polished abstract, organised sections, good-looking figures, citations, experiments, and a confident conclusion, while the science underneath remains fragile. The code may run while testing the wrong thing. The idea may sound original until someone tries to implement it. To quote the paper’s authors: “The core challenge is therefore no longer whether AI can produce the forms of research, but whether it can preserve the substance of research: evidence, judgment, provenance, and accountability.”

In both cases, from startups to research labs, AI is lowering the cost of producing something that looks complete while raising the cost of knowing whether it is actually fit for purpose. The bottleneck doesn’t disappear; it moves from production to validation, and it becomes harder to see because the finished MVP or scientific paper no longer carries the obvious marks of imperfection that used to flag the problem.

Marketing Activity Is Not Evidence

The same confusion can show up in marketing, where AI agents can generate activity faster than teams can interpret whether that activity means anything commercially. In the crypto gaming sector, one of the first campaigns using AI agents has delivered results for Whale.io. “This MCP campaign featured over 15 AI agents that collectively generated more than $900k in volume,” reported igaming expert Adar Ziv this week. “While some use Claude and Cursor to find bugs in smart contracts, break bridges, and earn bug bounties, others have taken a more entertaining route,” he added.

At first glance, that looks like evidence of success: proof that a well-designed incentive campaign can produce high transaction volume. But in this case, the key question is not “did the agents produce volume?” The better question is “what did that volume prove?” Did it reveal durable demand for agentic igaming, or did it show that agents can be directed to move capital rapidly inside a nicely designed incentive loop? Both are interesting, but they are not the same game. The danger is not that AI gets things wrong. It is that AI produces valid-looking evidence at speed, allowing teams to mistake accelerated activity for validated demand or business value.

Mission-Ready Is Not Flight-Ready

Space technology is where this problem becomes most unforgiving, and where the consequences of misreading an AI-generated output are hardest to recover from. In ordinary software, a weak assumption may surface as churn, wasted roadmap time, or a failed feature: costly, but usually recoverable. In space, the same kind of hidden dependency can become a missed launch window, an expensive redesign, a failed payload, or a lost mission entirely. NASA’s own systems-engineering guidance warns that systems can “pass verification but fail validation,” and its modelling and simulation standards require assumptions, limits of operation, and uncertainty to be made explicit before decision-makers rely on simulation outputs. That is a formal acknowledgement of something engineers have long understood: a model can be internally consistent, well-documented, and technically impressive while still depending on assumptions that have not been properly tested.

If AI can help space tech teams generate cleaner mission plans, simulations, technical documentation, and design options earlier in the process, it can also make those plans look more compelling before the load-bearing engineering assumptions have been stress-tested. The simulation runs, the outputs look plausible, the documentation is polished, and funding is being sought. But coherence is not the same as correctness. A model can look rigorous precisely because it is self-consistent, while still being wrong in the way that matters. In space, discovering that distinction late is not a sprint retrospective. It can be an expensive failed mission. Surfacing assumptions before building on them is therefore not a methodology choice. In space, it is an engineering necessity. The first obligation is not to pick the right assumption to test, but to surface the assumptions behind the simulation, mission plan, or design case. You cannot rank risks you have not yet surfaced.

A Stitch in Time

This is where I believe the Needle Framework earns its keep, not as another productivity layer or a better prompt pack, but as a way of forcing the hidden logic into the open before AI accelerates everything built on top of it. Its value is not that it magically knows the right answer. It is that it asks the awkward prior questions: what does this plan depend on being true, what has been assumed rather than evidenced, and which dependency would hurt most if it failed?

AI does not automatically improve judgement. In fact, it can all too easily disguise the lack of it. It can produce a fluent explanation, a polished simulation, a confident-looking research paper, or a finished-looking product before any of the assumptions underneath have been properly tested. That is the real risk: not that AI gets things wrong, but that it makes wrong things look right, faster than ever before. When people are forced to explain their logic step by step, weak assumptions start to show themselves.

The Needle Framework is a discipline for making that happen earlier: before the MVP looks finished, before the paper sounds convincing, before the campaign dashboard fills up, and before the simulation becomes a source of false confidence. Because AI is increasingly good at giving us what we want. The harder task is working out what we actually need.